Serving Private S3 Objects: Backend Proxy vs Gateway Auth vs Presigned URLs

Applications with private files need an authorization boundary in front of S3-compatible storage. This article compares three access patterns for serving private objects from an S3-compatible storage backend (Garage, in the examples) running on Kubernetes:

- Backend proxy

- Gateway authorization with SigV4 signing

- Presigned URLs

The architectural question is simple: where is the access decision enforced, and who transfers the object bytes?

This post is for engineers building applications with private or user-scoped files in S3-compatible storage who need a precise decision framework for choosing an access pattern.

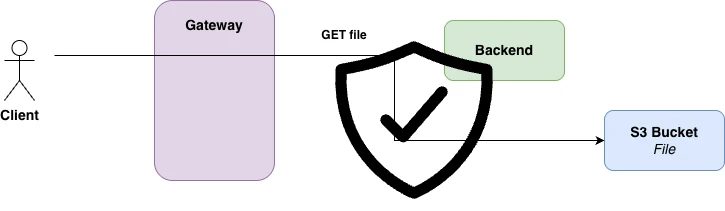

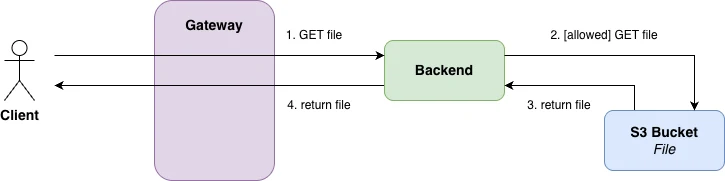

Pattern 1: Backend Proxy

Concept

In the backend proxy pattern, the application authenticates the user, determines which object is allowed, fetches that object from S3 itself, and streams the response back to the client.

The client never talks to S3 directly. The backend is both the authorization point and the data path.

This gives the application full control over access rules. It is therefore the most direct fit when authorization depends on application state such as ownership, project membership, subscription tier, legal hold, or any other business rule not naturally represented in the storage layer.

The trade-off is equally clear: every file download consumes backend resources. For large files or high download volume, the application becomes an unnecessary bottleneck.
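To make "authorization depends on application state" concrete, here is a minimal sketch of a rule the backend could evaluate before touching S3. The `FileRecord` type, its fields, and `can_download` are hypothetical and are not taken from the companion repository; the policy itself (premium gate, then owner or project member) is only an illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileRecord:
    """Hypothetical application-side metadata for one stored object."""
    key: str
    owner: str
    project_members: frozenset = frozenset()
    premium_only: bool = False


def can_download(username: str, is_premium: bool, record: FileRecord) -> bool:
    """Return True if this user may download the object described by record."""
    # Subscription gate: premium-only files are denied to non-premium users,
    # including the owner (an illustrative policy choice).
    if record.premium_only and not is_premium:
        return False
    # Ownership or project membership grants access; everyone else is denied.
    return username == record.owner or username in record.project_members
```

None of these facts live in the storage layer, which is why the check has to run in the application before the object is fetched.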

Implementation from the repository

```python
@router.get("/api/01-backend-proxy/file/{file_id}")
def get_file(file_id: str, username: str = Depends(get_current_user)):
    bucket = bucket_for(username)
    client = get_s3_client()

    response = client.get_object(Bucket=bucket, Key=file_id)
    content_type = response["ContentType"] if "ContentType" in response else "application/octet-stream"
    return StreamingResponse(response["Body"], media_type=content_type)
```

The key property is `bucket_for(username)`. The authenticated user determines which bucket is queried. The backend never exposes S3 credentials to the client and never delegates access directly.
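Because the backend sits in the data path, how it reads the object body matters for memory use. The following is a minimal sketch of chunked reading over any file-like object; `iter_chunks` and the 64 KiB chunk size are assumptions for illustration, not code from the repository.

```python
import io
from typing import BinaryIO, Iterator


def iter_chunks(body: BinaryIO, chunk_size: int = 64 * 1024) -> Iterator[bytes]:
    """Yield the body in fixed-size chunks instead of reading it all at once."""
    while True:
        chunk = body.read(chunk_size)
        if not chunk:
            # An empty read means the stream is exhausted.
            break
        yield chunk


# Example with an in-memory stream standing in for the S3 response body.
chunks = list(iter_chunks(io.BytesIO(b"abcdefghij"), chunk_size=4))
```

A generator like this could be handed to a streaming response in place of the raw body, since the boto3 response body also exposes `read(amt)`. Memory per request then stays bounded by the chunk size, but the bandwidth and connection cost of the pattern is unchanged.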

Properties

- strongest application-level control

- simplest operational model

- easiest to debug

- highest backend bandwidth and connection cost

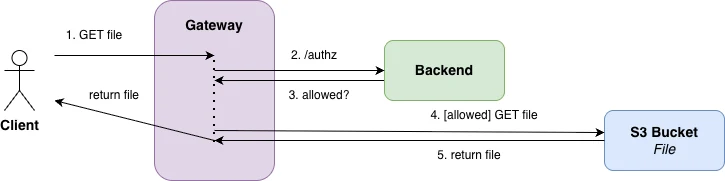

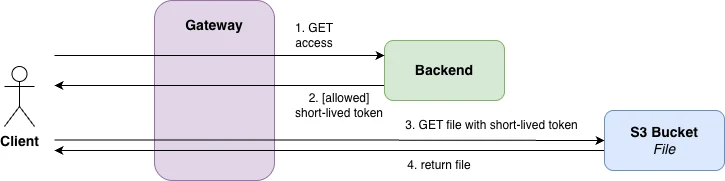

Pattern 2: Gateway Authorization

Concept

In the gateway authorization pattern, the client sends the file request to the gateway rather than the backend. The gateway calls an authorization endpoint on the backend, which validates the JWT and generates SigV4 headers for the S3 request. The gateway then attaches those headers and forwards the request to S3.

The backend is no longer in the object data path. It becomes an authorization and signing component.

This pattern separates control from transfer: the application decides whether the request should proceed, but the gateway and storage system carry the payload.

That separation improves scalability, but it also increases architectural complexity. The system now depends on correct route rewriting, correct header forwarding, correct canonical host handling for SigV4, and correct gateway policy configuration.
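The dependence on canonical host handling follows directly from how SigV4 works: the Host header is part of the signed canonical request. The sketch below recomputes a SigV4 signature with only the standard library, for a GET with an empty body and no query string. The host names, secret, and region in the example are placeholders, and the path is assumed to be already URI-encoded.

```python
import hashlib
import hmac

# SHA-256 of an empty payload, the body hash for a plain GET.
EMPTY_SHA256 = hashlib.sha256(b"").hexdigest()


def _hmac(key: bytes, msg: str) -> bytes:
    return hmac.new(key, msg.encode("utf-8"), hashlib.sha256).digest()


def sigv4_signature(host: str, path: str, amz_date: str,
                    secret_key: str, region: str, service: str = "s3") -> str:
    """Compute the SigV4 signature for GET <path> with an empty body."""
    date = amz_date[:8]  # YYYYMMDD prefix of the ISO 8601 basic timestamp
    canonical_headers = (
        f"host:{host}\n"
        f"x-amz-content-sha256:{EMPTY_SHA256}\n"
        f"x-amz-date:{amz_date}\n"
    )
    signed_headers = "host;x-amz-content-sha256;x-amz-date"
    # Method, URI, (empty) query string, headers, signed header list, payload hash.
    canonical_request = "\n".join(
        ["GET", path, "", canonical_headers, signed_headers, EMPTY_SHA256]
    )
    scope = f"{date}/{region}/{service}/aws4_request"
    string_to_sign = "\n".join([
        "AWS4-HMAC-SHA256",
        amz_date,
        scope,
        hashlib.sha256(canonical_request.encode("utf-8")).hexdigest(),
    ])
    # Derive the signing key: date -> region -> service -> "aws4_request".
    key = _hmac(("AWS4" + secret_key).encode("utf-8"), date)
    for part in (region, service, "aws4_request"):
        key = _hmac(key, part)
    return hmac.new(key, string_to_sign.encode("utf-8"), hashlib.sha256).hexdigest()


# Same request, two different Host values (both placeholders).
common = dict(path="/alice/report.pdf", amz_date="20240101T000000Z",
              secret_key="example-secret", region="garage")
sig_internal = sigv4_signature(host="garage.storage.svc:3900", **common)
sig_public = sigv4_signature(host="files.example.com", **common)
```

The two signatures differ even though only the host changed. If the backend signs for one host and the gateway forwards the request with another, the storage server recomputes a different signature and rejects the request.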

Implementation from the repository

The signing happens in the following function:

```python
def _sigv4_headers(s3_path: str) -> dict:
    creds = Credentials(S3_ACCESS_KEY, S3_SECRET_KEY)
    req = AWSRequest(method="GET", url=f"{S3_ENDPOINT}{s3_path}")
    req.headers["Host"] = _S3_HOST
    SigV4Auth(creds, "s3", S3_REGION).add_auth(req)
    return {
        "Authorization": req.headers["Authorization"],
        "X-Amz-Date": req.headers["X-Amz-Date"],
        "X-Amz-Content-Sha256": req.headers.get(
            "X-Amz-Content-Sha256",
            "e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855",
        ),
    }
```

The gateway policy calls that authz endpoint and forwards the generated headers:

```yaml
spec:
  extAuth:
    headersToExtAuth:
      - authorization
    http:
      backendRefs:
        - name: {{ include "example-02.fullname" . }}
          namespace: example-02
          port: {{ .Values.service.port }}
      path: /api/02-gateway-auth/authz
      headersToBackend:
        - Authorization
        - X-Amz-Date
        - X-Amz-Content-Sha256
```

The repository also needs an `EnvoyPatchPolicy` to rewrite the upstream Host header so that Garage accepts the SigV4 signature. That detail is not incidental. It is exactly the kind of protocol-level coupling that makes this approach harder to operate correctly.

Properties

- backend removed from file transfer path

- centralized enforcement at the gateway layer

- highest infrastructure and debugging complexity

- strongest dependence on gateway behavior and operator maturity

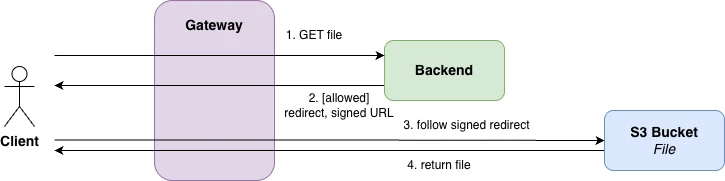

Pattern 3: Presigned URLs

Concept

In the presigned URL pattern, the backend authenticates the user and decides whether access is allowed, but instead of fetching the object itself, it generates a short-lived signed URL and redirects the client to S3.

The backend performs the authorization decision once. S3 serves the file directly afterward.

This keeps application logic in control while removing the backend from the transfer path. In practice, it is often the most efficient compromise between simplicity and scalability.

The security model is different from a backend proxy. A presigned URL is a bearer artifact: anyone holding the URL can use it until it expires. That is usually acceptable for short TTLs, but it is a real property of the model and should be stated precisely.
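The exposure window can be read straight off the URL. Assuming the URL was signed with SigV4 query parameters, it carries `X-Amz-Date` (the signing time) and `X-Amz-Expires` (the lifetime in seconds). The helper names and the example URL below are made up for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional
from urllib.parse import parse_qs, urlsplit


def presigned_url_expiry(url: str) -> datetime:
    """Return the UTC instant at which a SigV4 presigned URL stops being valid."""
    query = parse_qs(urlsplit(url).query)
    signed_at = datetime.strptime(
        query["X-Amz-Date"][0], "%Y%m%dT%H%M%SZ"
    ).replace(tzinfo=timezone.utc)
    return signed_at + timedelta(seconds=int(query["X-Amz-Expires"][0]))


def is_expired(url: str, now: Optional[datetime] = None) -> bool:
    """True once the current time has reached the URL's expiry instant."""
    now = now or datetime.now(timezone.utc)
    return now >= presigned_url_expiry(url)


# Hypothetical URL with a 30-second lifetime; the signature value is a dummy.
example_url = (
    "https://s3.example.com/alice/report.pdf"
    "?X-Amz-Algorithm=AWS4-HMAC-SHA256"
    "&X-Amz-Date=20240101T120000Z"
    "&X-Amz-Expires=30"
    "&X-Amz-SignedHeaders=host"
    "&X-Amz-Signature=deadbeef"
)
expiry = presigned_url_expiry(example_url)
```

Between the signing time and this instant, possession of the URL is the only credential required. Nothing in the URL ties it to the user it was issued to.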

Implementation from the repository

```python
@router.get("/api/03-presigned-uri/file/{file_id}")
def get_presigned_url(file_id: str, username: str = Depends(get_current_user)):
    bucket = bucket_for(username)
    client = get_s3_client()

    url = client.generate_presigned_url(
        "get_object",
        Params={"Bucket": bucket, "Key": file_id},
        ExpiresIn=PRESIGNED_URL_TTL,
    )

    return RedirectResponse(url=url, status_code=302)
```

Here the backend still decides the bucket and key, but the actual payload no longer traverses the application service. The repository sets the presigned URL TTL via `PRESIGNED_URL_TTL_SECONDS`, with 30 seconds as the default.
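Since the TTL defines the exposure window, it is worth validating when it is loaded. The sketch below shows one way such a setting could be read. It assumes `PRESIGNED_URL_TTL_SECONDS` is an environment variable, and `load_presigned_ttl` and the upper bound are illustrative rather than taken from the repository. The bound is the 7-day maximum AWS documents for SigV4 presigned URLs; other S3-compatible backends may differ.

```python
import os
from typing import Mapping

# AWS documents a 7-day maximum lifetime for SigV4 presigned URLs.
MAX_PRESIGN_SECONDS = 7 * 24 * 60 * 60


def load_presigned_ttl(env: Mapping[str, str] = os.environ, default: int = 30) -> int:
    """Read the presigned URL TTL in seconds, falling back to the default."""
    ttl = int(env.get("PRESIGNED_URL_TTL_SECONDS", default))
    if not 1 <= ttl <= MAX_PRESIGN_SECONDS:
        raise ValueError(f"presigned URL TTL out of range: {ttl}")
    return ttl
```

Failing fast on a zero, negative, or multi-week value avoids silently issuing URLs that are either useless or valid far longer than intended.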

Properties

- backend keeps the authorization decision

- S3 serves the file directly

- substantially lower backend transfer cost than proxying

- delegated access remains valid until URL expiry

Comparison Matrix

| Aspect | Backend Proxy | Gateway Auth | Presigned URL |
|---|---|---|---|
| Authorization decision | Backend | Backend + gateway policy | Backend |
| File bytes served by | Backend | Gateway/S3 path | S3 |
| Client talks directly to S3 | No | Usually no | Yes |
| Fit for complex business rules | High | Medium to high | High at issuance time |
| Backend bandwidth cost | High | Low | Low |
| Operational complexity | Low | High | Medium |
| Ease of debugging | High | Low | Medium to high |
| Dependence on gateway features | None | High | None |
| Exposure window after approval | Request-scoped | Request-scoped | URL TTL |
| Good default for early-stage projects | Yes | No | Often yes |

Out of Scope but Relevant: AWS Security Token Service (STS)

The three patterns in this repository solve how an application mediates access to private S3 objects. They do not cover native storage permission models for granting a specific user access to a specific object for a limited time.

That is where technologies such as AWS Security Token Service (STS) become relevant. STS is a separate AWS service, not part of the raw S3 API, and it supports finer-grained, time-bound permissions at the object level. This matters for use cases such as:

- sharing one object with one specific user

- temporary access grants on individual files

- auditable collaboration around object-level permissions

- explicit revocation semantics beyond short URL expiry

Garage does not currently provide that capability in the same way. If your product requires first-class per-object permission grants, the architectural decision may no longer be only about access pattern. It may also be about storage capability.

Summary

The three approaches differ along two axes: where authorization is enforced and who transfers the object bytes.

- Backend proxy is the most direct and usually the best starting point when correctness, clarity, and application-specific authorization matter more than transfer efficiency.

- Presigned URLs are the usual next step when transfer volume increases and short-lived delegated access is acceptable.

- Gateway authorization is appropriate when a team already operates gateway-based policy infrastructure and is willing to accept higher implementation and operational complexity.

For most projects, the correct default is to start with the simplest model that is correct for the current product and team. Let things become a problem before you add complexity.

Companion repository: georg-schwarz/securing-s3-access